Flock and its customers have played a regulatory shell game for years: they claim to take pictures of license plates that are visible on public roads, and that, therefore, there are no privacy or Fourth Amendment concerns. Robert Otten, Flock’s Senior Director of Cybersecurity and Risk Management, just certified to the federal government that his company has been lying all along.

The FBI sets rigid standards for handling “criminal justice information” (CJI). CJI includes things like criminal histories, fingerprint databases, investigative files, and so on.

These standards are mainly codified in the CJIS (Criminal Justice Information Services) Security Policy (CSP): 461 pages of technical and operational security standards.

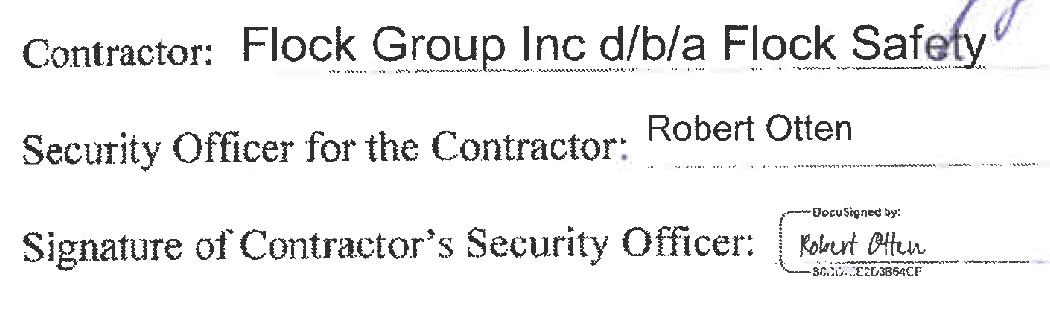

Otten signed a federal addendum certifying CJIS compliance for Story County, Iowa, invalidating the company’s years of “we don’t handle sensitive information” claims. Worse, he signed it despite knowing that Flock’s devices fail the FBI policy’s most basic security requirements.

Either Flock handles criminal justice information and is dangerously non-compliant, or it doesn’t and its security chief just lied to the federal government.

There is no third option.

Certified, Sealed, and Delivered

Despite their insistence on “public plates on public roads,” Flock and its customers have asserted confidentiality for ALPR data, audit logs, training materials, compliance documentation, and so on. They have even gone so far as to devise a theory of Schrödinger’s Public Record, in which a record’s confidentiality hinges on whether the government has observed it.[1]

But the most formal step Flock had taken until now was contracting with Diverse Computing, Inc., of Tallahassee, Florida, to earn that company’s “CJIS ACE Compliance Seal.”

That certification is not approved or monitored by the federal government, and it is not a requirement to handle CJI. It’s a purely commercial service the company ostensibly provides as reassurance for the participant and, perhaps more importantly, their downstream customers.

However meaningful a “CJIS ACE Compliance Seal” from a for-profit Florida company may be, there is no formal oversight of the certification program, and the seal carries no legal weight. While the education and the vetting could have value, the “seal” itself is a purely performative marketing gimmick.

Now, however, Flock has gone beyond placing a seal on its website, and it has formally admitted to handling CJI. More importantly, it has committed to following the CSP’s security requirements:

This was not a document signed between well-meaning but uninformed county staff and a junior member of the Flock sales team.

Flock and the county signed this document after the Iowa Department of Public Safety investigated a complaint that Story County had applied funding awarded for a criminal justice information system to a Flock contract without having the CSP compliance records to show for it.[2]

Flock’s Senior Director of Cybersecurity and Risk Management, Robert Otten, sat across the table:

The 10-foot Pole Defense

Flock and the county signed this document in September 2025, in the middle of an ongoing disclosure period during which Flock has not fixed numerous critical vulnerabilities in its systems. As Flock’s head of security, and designated “Security Officer” for the FBI, Otten was aware of these vulnerabilities and Flock’s non-compliance when he certified the opposite.

Those of us who, like Otten, have been in the tech game for a while will remember 2018: the year of Meltdown and Spectre. This set of vulnerabilities (Meltdown is CVE-2017-5754; the Spectre variants are CVE-2017-5753 and CVE-2017-5715) wreaked havoc on security teams globally. These were highly sophisticated bugs in common hardware (mainly Intel processors) that allowed an attacker to read the plaintext in memory.

These hardware vulnerabilities led to teams forgoing weeks of sleep and family time to roll out patches and secure the systems in their server environments. It was a Big Deal.

But while Meltdown required Ph.D.-level knowledge and near-nation-state resources to exploit effectively at scale, Flock’s vulnerabilities require YouTube and a ladder.

In a post titled “Gunshot Detection and License Plate Reader Security Alert,” dated May of 2025—months before certifying compliance to the county and the federal government—Flock actively attempted to downplay the severity of some of these vulnerabilities.

Flock doubled down in November 2025 with its “Response to Compiled Security Research on Flock Safety Devices.” This blog post acknowledges that “[t]he researcher has been in contact with Flock Safety … and notified Flock of findings earlier in 2025,” yet makes the bold claim that, as of November, “Overall, none of the vulnerabilities detailed in the report have an impact on our customers’ ability to carry out their public safety objectives”[3] (emphasis in original).

Flock acknowledged the vulnerabilities in its hardware platforms. Unlike Meltdown, these don’t require advanced knowledge of how CPUs work. The attack that allows you to read the sensitive information requires either (1) a USB cable or (2) a laptop with WiFi and knowledge of how many times to push a button[4]. And unlike the entire cybersecurity community back in 2018, Flock did nothing.

Flock’s defense is that exploitation requires “knowledge of device debugging,” a bare-minimum skill for any software developer. There are easy-to-follow, step-by-step instructions in the official developer documentation, and basic five-minute tutorial videos are available on YouTube.

We may as well leave the keys on the dashboard and claim car thefts aren’t a problem, because opening the door and starting the car requires “knowledge of motor vehicles.”

What’s more, although the devices are left unattended on the side of the road, Flock defends their security by arguing that they are placed “several feet above normal height.” The context is too telling to exclude:

As responsible stewards of customer data, upon notification we analyzed the impact of these vulnerabilities and subsequently have made the following submissions to Mitre for inclusion in the National Vulnerability Database.

- Debug interface enabled (CWE-1191)

- Hardcoded credentials (CWE-798, CWE-259)

- Hardcoded connection details ( CWE-798, CWE-259)

- Clear Text Storage of Code (CWE-312)

These are not material vulnerabilities, and both severity and likelihood to be exploited are low. The exploitation of these vulnerabilities require physical access to a device and knowledge of device debugging. If a person was able to gain physical access to the device (which is typically placed on a pole several feet above normal height), they would still not be able to gain access to footage, as the data is only stored for a very limited time duration on the device following its transmission to the cloud.

We don’t have to debate the definition of “limited time” when an attacker can install software with full camera and network access, nor do we have to contemplate the efficacy of height when malicious actors presumably have access to basic ladder or truckbed technology.

Whether they can be exploited, whether they require device access, whether they come on a stick or are deep-fried: those issues don’t concern the CSP, which, in federal government fashion, lays out extensive pass/fail compliance requirements. Notably, the US Department of Justice didn’t create a five-second rule for confidential data.

To name just a few of the CSP requirements Flock fails to meet, and failed to meet at the time of certification:

- “Protect the confidentiality and integrity of the following information at rest: CJI when outside physically secure locations using cryptographic modules which are [federally] certified.”

- “Prevent non-privileged users from executing privileged functions.”

- “Enable user authentication and encryption mechanisms for the management interface of the [wireless access point].”

- “Place [wireless access points] in secured areas to prevent unauthorized physical access and user manipulation”

These aren’t speculative, debatable, or matters of questionable degree or urgency. They are violations of federal laws and regulations, formally classified by the FBI as highest priority.[5]

Not something you can bury under your finest blog bullshit.[6]

Denial, Deflection, and Dumb Sensors

Given Flock’s stance thus far, I do not expect the company to take ownership now (or ever). It will either argue that its head security honcho, Otten, had no idea what he was doing when he signed the addendum with Story County, or, more likely, argue that the devices sprinkled along roadsides are not covered by the CSP. Neither argument is plausible.

Plausible Deniability, Implausible Competence

Otten, as head of security, was aware of the highly publicized vulnerabilities. No company puts out press releases on issues this serious without C-suite sign-off. Especially not a company whose business relies on a government clientele that tends to value the appearance of legal and regulatory compliance.

Flock’s own blog post admits that GainSec has been in direct contact with Flock about these vulnerabilities “since earlier this year” (which Gaines dates to February 2025). Many of the issues Gaines reported were not confirmed as fixed, and some were outright confirmed as “not fixed” as of November 2025. In the middle of that period, Otten certified compliance anyway.

The alternative, hypothetical “Otten is incompetent” defense would rest on his failure to understand what criminal justice information is, or on his inability to read the terms of a two-page contract addendum.

These defenses might carry some weight if he had been a junior sales exec. They fall flat when the person signing the contract has been the company’s top security director for years, after gaining extensive experience in companies like CLEAR and KPMG.

Public Records, Private Denials

Asserting that photos, videos, and audio recordings taken in public places are not CJI is a potentially persuasive position. To become a credible one, it must answer a few simple questions, among them: Why did Flock agree to be bound by the CSP? And why did the Iowa Department of Public Safety require it to?

Even if we ignore reality and assume that Flock’s position all along has been that it does not operate a criminal justice information system, does not handle CJI, and is otherwise not subject to the requirements of the CSP[7], its head of security still certified its compliance with federal CJI regulations, knowing either that the system did not comply or that it did not handle CJI in the first place. Either way, the certification was false.

That action alone invites scrutiny under federal false-statements statutes, and it should tell city councils how much trust to place in this company.

If they’ll lie to the feds, they’ll lie to you.

Head in the Cloud

This will, undoubtedly, be the primary defense of Flock and its customers. They will argue until they are blue in the face that the “ALPR Cameras”[8] are dumb devices that don’t process or store CJI (except temporarily, as Flock claims on its blog, and unencrypted, as GainSec’s research found).

Let’s get that out of the way.

GainSec’s research also confirmed others’ earlier findings: the devices are powered by an unsupported development kit version of Android. Android comes standard with all the tools needed for things like wireless networking and accessing remote servers and services.

To visualize what’s happening here: from a security (and CSP) perspective, a “camera” running Android is a fully functional computer system, the functional equivalent not of a “dumb” webcam but of a MacBook Pro[9].

Now, imagine these MacBooks are up on poles, scattered throughout cities and along rural roads, with their WiFi networks accessible to any passer-by. Imagine they are directly connected to, and provisioned with credentials (stored unencrypted and transmitted in the clear) for, a database that contains all the (claimed) CJI that Flock collects nationwide: billions upon billions of data points on everyone’s day-to-day movements.

And now imagine that the company’s primary response for more than six months, and counting, has been, “well, sure, that looks bad, but what you’re not realizing is that these MacBooks are on poles. Not just any old poles, but above-normal-height poles. Also, the system still works, soooo … why is this a problem?”

Even if you wanted to argue that the cameras themselves don’t hold CJI, the CSP governs entire systems, not individual servers, and every access terminal must follow the system’s security policy.

Putting them up on a stick and leaving them out in the country is not conducive to system security.

Silver Lining, Lead Security

“CSP permits cloud services,” will be the other retort. It does. It also sets clear rules for cloud services and provides helpful, illustrative scenario-based examples.

Here’s one such example:

The cloud provider, however, does not have unescorted logical or physical access to any information system resulting in the ability, right, or privilege to view, modify, or make use of unencrypted CJI as the SaaS provider maintains the encryption keys. In this scenario, the agency would be required to comply with Section 5.12 for employees of the SaaS provider with access to CJI but would not need to do so for employees of the cloud provider used by the SaaS provider.

Applied to Flock: because Flock employees do have access to unencrypted data, both on the Falcon devices and likely in the cloud services, the agency would plainly be required to comply with Section 5.12 for those employees (known in version 6 as Policy Area PS, Personnel Security, Control PS-3).

That specific control requires state- and federal-level fingerprint-based background checks, disqualifies felons, requires formal sign-off for misdemeanors, and so on.
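The cloud scenario quoted above turns on a single question: can the party view or use unencrypted CJI? The toy model below encodes that reading. It is a simplification, not the CSP’s wording: it assumes that holding the encryption keys counts the same as storing plaintext. The `Party` class and `needs_personnel_screening` function are hypothetical names for illustration, and the key-custody value given for Flock is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Party:
    """One link in a hosting chain (a hypothetical model, not CSP terminology)."""
    name: str
    holds_keys: bool        # controls the keys that encrypt the CJI
    stores_plaintext: bool  # keeps CJI unencrypted somewhere it can reach


def needs_personnel_screening(party: Party) -> bool:
    """Simplified reading of the quoted scenario: Section 5.12 (PS-3 in v6)
    applies to any party able to view or use unencrypted CJI."""
    # Holding the keys is as good as holding plaintext, since the party can decrypt.
    return party.holds_keys or party.stores_plaintext


# The CSP scenario: the SaaS provider keeps the keys, so the cloud host only
# ever handles ciphertext.
cloud_host = Party("cloud provider", holds_keys=False, stores_plaintext=False)
saas = Party("SaaS provider", holds_keys=True, stores_plaintext=False)

# Flock as characterized in this article: unencrypted data on the devices.
# holds_keys=True is an assumption; stores_plaintext alone decides the outcome.
flock = Party("Flock", holds_keys=True, stores_plaintext=True)

for p in (cloud_host, saas, flock):
    print(f"{p.name}: screening required = {needs_personnel_screening(p)}")
```

Run as written, it reports that screening is not required for the cloud provider but is required for both the SaaS provider and Flock: the obligation follows access to plaintext, not the label on the contract.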

Agencies must also have signed acknowledgements of the CSP on file for all contractor employees, they must have “Information Exchange Agreements” with other agencies in place (there is no exception for a “Nationwide sharing” checkbox), and all security vulnerabilities must be fixed within 90 days (or 15 days for P1 violations, like those reported in February 2025).
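Those remediation windows are easy to check against the timeline. The sketch below assumes the reports date to February 28, 2025 (the text says only “February 2025,” and the latest possible day is the most generous reading for Flock), takes September 15, 2025, the date of the addendum listed under “The Receipts,” as the signing date, and applies only the two windows named above. The function names are illustrative.

```python
from datetime import date, timedelta

# Assumption: the text says only "February 2025"; Feb 28 is the latest
# possible day and therefore the most generous reading for Flock.
REPORTED = date(2025, 2, 28)
# Date of the Story County addendum, per "The Receipts".
SIGNED = date(2025, 9, 15)

# Remediation windows as stated in the text: 15 days for P1, 90 days otherwise.
WINDOWS = {"P1": 15, "other": 90}


def remediation_deadline(reported: date, window_days: int) -> date:
    """Last day a flaw reported on `reported` may remain unfixed."""
    return reported + timedelta(days=window_days)


def days_overdue(reported: date, window_days: int, as_of: date) -> int:
    """Days past the remediation deadline as of `as_of` (0 if not yet due)."""
    return max(0, (as_of - remediation_deadline(reported, window_days)).days)


for label, window in WINDOWS.items():
    deadline = remediation_deadline(REPORTED, window)
    overdue = days_overdue(REPORTED, window, SIGNED)
    print(f"{label}: due {deadline}, {overdue} days overdue at signing")
```

Even on that generous reading, the P1 deadline (March 15, 2025) had passed 184 days before the addendum was signed, and the 90-day deadline (May 29, 2025) had passed 109 days before.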

Federal Offense, Federal Oversight

Your local PD is nominally in charge of oversight. But they’re not the only ones watching.

Under the CSP, the state’s CJIS Systems Agency (CSA) and the FBI may conduct scheduled and unscheduled audits of Flock’s systems and facilities, and the government is no longer limited to angry letters, separate agreements, or investigations hidden behind the veil of corporate secrecy. And it doesn’t stop in Iowa.

Because Flock is organizationally, architecturally, and operationally monolithic, the system certified in Iowa is the same system serving agencies in other states, so CSAs in those states are authorized to act as well. They have a few options:

- They can audit or investigate Flock customers and the Flock system for facilitating an unauthorized connection to a criminal justice information system (SA-9, “External System Services”).

- Regardless of contractual ownership provisions, CJI is statutorily owned by the FBI or state; a CSA is authorized to inspect how it’s processed.

- As the “friendliest” option, and one specifically designed with vendors like Flock in mind, the CSP provides a reciprocity framework for audits: a CSA may request that another CSA audit the system, and CSAs may rely on each other’s audits (SA-9(c)(4)).

If you are in a state where Flock is used, your state’s CSA can act, should it choose (or be forced) to do so.

The End of the (Root) Shell Game

Flock has played its regulatory shell game to the point of absurdity: telling cities it’s just a camera, telling police it’s a crime-fighting supercomputer, and telling the public that their own private information is a commodity to be bundled and made available in a Spotify-like subscription package (because we promised we won’t sell it!). With this signed federal addendum, the music stops.

They have admitted their devices are terminals for a federal criminal justice network. They have admitted their staff requires the same vetting as a detective or an analyst. And thanks to their own sloppy engineering, they have demonstrated they cannot meet the standards they just swore to uphold.

The question now isn’t whether Flock is compliant; it’s which state Attorney General will be the first to prosecute, and whether the target will be Flock or the cities and police departments under whose watch this all occurred.

To encourage your Attorney General in their efforts, start requesting and forwarding evidence of local agency CJIS (non-)compliance: security addenda, management control agreements, certifications from Flock employees, audit results, review schedules, et cetera.

Local agencies can either produce the security addendum, in which case Flock’s failure to address the glaring security holes stands as irrefutable evidence of non-compliance and failures of oversight, or they can’t produce the documents, in which case they are running a non-compliant connection to a system Flock swore was secure.

Flock must pick its poison.

The Receipts

- Criminal Justice Information Services Security Addendum between Story County and Flock (9/15/2025)

- Criminal Justice Information Services (CJIS) Security Policy v6.0 (12/27/2024)

[1] The Washington court hearing the argument would have none of it.

[2] The complaint was filed by this author, who also discussed CJIS matters with the county attorney before the addendum was signed.

[3] Which, of course, directly contradicts its customers’ position when responding to public records requests: that the information must be withheld because its disclosure would jeopardize ongoing criminal investigations.

[4] For more in-depth technical information, see Jon “GainSec” Gaines’ whitepaper, Examining the Security Posture of an Anti-Crime Ecosystem.

[5] CSP references: SC-28, SI-7, AC-6(10), 5.20.1.1.

[6] Though it should be noted that “finest” is relative; I can appreciate good bullshit, but Flock consistently fails to deliver even on that promise.

[7] And, therefore, the information is also not exempt from public records (FOIA) laws.

[8] Scare quotes because there is nothing particularly “ALPR” about cameras that take pictures and use ML or other AI to process them.

[9] For the technically pedantic reader: yes, it is both technically and functionally equivalent to a Linux server, but those don’t make good visualization aids.